By Zainab Imran | AI Development, Accessibility, Women in STEM

There is a moment in every project when you stop thinking about the code and start thinking about the person on the other side of it. For me, that moment came at 2 a.m., somewhere between debugging an OpenCV pipeline and realizing that the system I was building could, if I got it right, give someone independence they had never had before.

That project is AURA_AI. And building it changed everything about how I understand artificial intelligence.

What is AURA_AI?

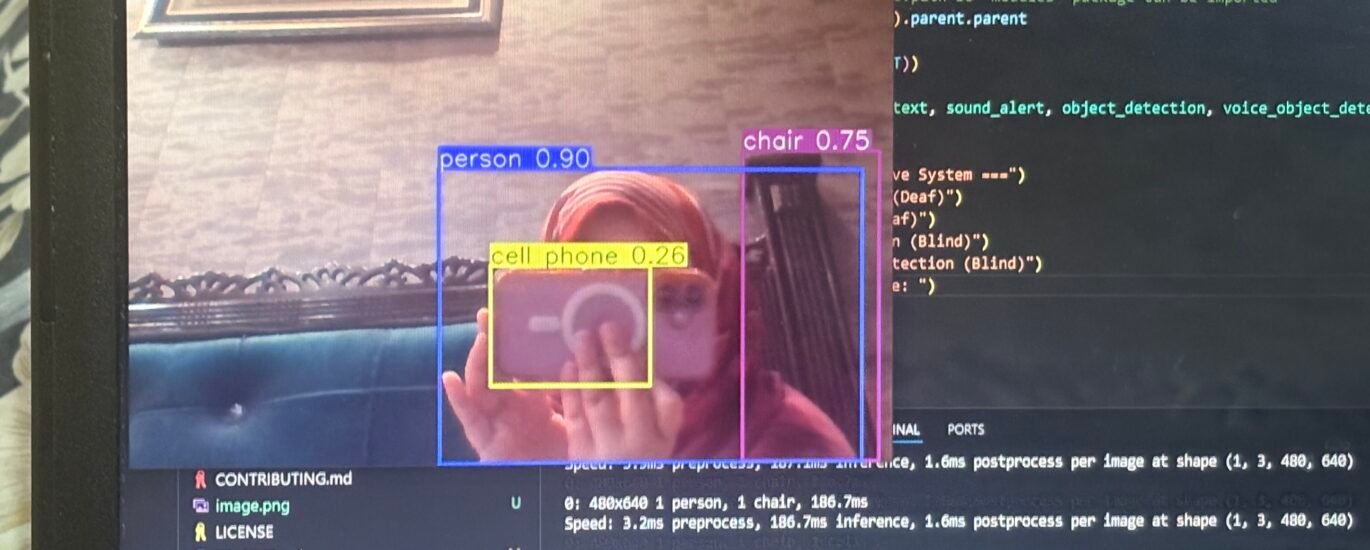

AURA_AI is a real-time assistive system designed for people with visual and hearing impairments. Built in Python using OpenCV, text-to-speech synthesis, and speech-to-text recognition, it combines computer vision-based gesture recognition with contextual audio feedback to help users navigate their environment without relying on sight or hearing alone.

It is fully open source, published under an MIT license, and designed specifically to run on low-cost hardware, because the communities who need assistive technology most are rarely the ones who can afford premium devices.

“The gap in assistive technology is not technical; it is economic. The tools exist. The question is whether we build them for the people who cannot afford the existing ones.”

The Engineering Decisions That Actually Mattered

Most technical writing focuses on what worked. I want to tell you about what was hard, because the hard parts are where the learning is.

The biggest challenge was not building the gesture recognition model; it was making it fast enough and small enough to be useful on hardware that costs under $100. A model that achieves 99% accuracy on a research-grade GPU is useless to someone in a rural district in Pakistan who owns a basic Android device. So every architectural decision I made was filtered through one question: does this still work on low-cost hardware?
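To make that question concrete, here is a minimal sketch of the kind of check it implies: time a per-frame function and compare the 95th-percentile latency against a budget. This is an illustration, not the AURA_AI code. The names `measure_latency_ms` and `fits_budget`, the stand-in frame function, and the 100 ms budget are all assumptions chosen for the example.

```python
import math
import time


def measure_latency_ms(process_frame, frames, warmup=3):
    """Return one latency in milliseconds per frame for process_frame."""
    for frame in frames[:warmup]:
        process_frame(frame)  # warm caches so the first timings are not skewed
    latencies = []
    for frame in frames:
        start = time.perf_counter()
        process_frame(frame)
        latencies.append((time.perf_counter() - start) * 1000.0)
    return latencies


def fits_budget(latencies_ms, budget_ms):
    """True when the 95th-percentile latency is within budget_ms."""
    if not latencies_ms:
        raise ValueError("need at least one latency sample")
    ordered = sorted(latencies_ms)
    # Nearest-rank percentile: the smallest value covering 95% of samples.
    index = max(0, math.ceil(0.95 * len(ordered)) - 1)
    return ordered[index] <= budget_ms


if __name__ == "__main__":
    # Hypothetical stand-in for a real pipeline step: sum the "pixels".
    fake_frames = [list(range(10_000)) for _ in range(50)]
    samples = measure_latency_ms(sum, fake_frames)
    # Assumed budget of 100 ms per frame (roughly 10 frames per second).
    print("within budget:", fits_budget(samples, budget_ms=100.0))
```

Checking a high percentile rather than the average matters here, because occasional slow frames are exactly what a user on modest hardware would notice. The numbers only mean something when the script runs on the target device itself.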

After extensive testing and optimization, the final pipeline achieved 87% gesture recognition accuracy in controlled testing: not perfect, but deployable and improving with each iteration. Model compression techniques significantly reduced the model's size at inference time, and the system was designed with a silent mode and full keyboard navigation from the start, not as an afterthought.
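This post does not go into which compression techniques were used, so the following is only an illustration of one common approach: symmetric 8-bit quantization, which stores each weight as a single signed byte plus one shared scale factor. The function names and the sample weights are hypothetical, and a real model would normally be quantized with its framework's own tooling rather than by hand.

```python
from array import array


def quantize_int8(weights):
    """Map floats to integers in [-127, 127] plus one shared scale."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float weights from the int8 values."""
    return [q * scale for q in quantized]


def float32_bytes(weights):
    """Storage needed when each weight is a 32-bit float."""
    return len(array("f", weights).tobytes())


def int8_bytes(quantized):
    """Storage needed when each weight is a signed 8-bit integer."""
    return len(array("b", quantized).tobytes())


if __name__ == "__main__":
    weights = [0.5, -1.0, 0.25, 0.0, 0.8, -0.3]  # made-up example values
    q, scale = quantize_int8(weights)
    restored = dequantize(q, scale)
    worst = max(abs(w - r) for w, r in zip(weights, restored))
    print("bytes before:", float32_bytes(weights))
    print("bytes after:", int8_bytes(q))
    print("worst rounding error:", worst)
```

The trade-off is visible in the two numbers it reports: storage drops to a quarter of the 32-bit size, while each weight moves by at most half a quantization step. Whether that rounding costs accuracy is something only a re-run of the evaluation can answer.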

What I Am Building Next

The version I published is the beginning. What I want to build next is a lightweight multimodal system capable of translating Pakistani Sign Language and narrating environments in Urdu: a version that works not just for a generic global user, but for the specific communities in Pakistan, where 5.5 million people live with hearing or visual impairments and where most existing assistive tools were designed for English speakers with reliable internet connections.

This is why I am pursuing an MSc in artificial intelligence: not to study AI in the abstract, but to return with the research depth to build the next iteration properly.

What Building AURA_AI Taught Me About Being a Woman in AI

I am a Pakistani woman. I built this system alone, as an undergraduate, with no research lab, no institutional funding, and no team. I am telling you this not for sympathy but because it is relevant: the barriers to entry in AI research are not evenly distributed, and the people who most need AI systems built for them are often the people least represented in the rooms where those systems are designed.

AURA_AI is, in part, my answer to that problem. Every line of code I contribute to this project is an argument that the pipeline for who gets to build AI needs to be wider.

If you are a developer working on accessibility technology, I would love to collaborate. The GitHub repository is open: contributions, issues, and conversations are all welcome.

GitHub: github.com/zai-codes/AURA_AI